Remember the Y2K computer crisis? Of course you do. Well, there is another one coming our way, and it could be even worse. It is the Unix time rollover problem (aka Y2038), and it could cause hundreds of thousands of computers to crash at about 3am UTC on January 19, 2038. Seriously? Yes! You can’t make this stuff up. After my prior posting on Internet time, we need to better understand the origin of time on the Internet and why it will blow up in a short 14 years from now.

First of all, what is Unix (pronounced “yoo’ nix”)? The simple answer: it is a computer operating system developed by a small rogue research group at AT&T Bell Laboratories in the late 1960s & early 1970s whose descendants and variants have become ubiquitous since then. An ‘operating system’ is the software that powers a computer, like Windows. The Unix offspring (e.g., Linux is one) are the operating systems that power almost every computer that is not run by Microsoft Windows, including Android phones and iPhones, MacBooks, about 90% of computers that run “the cloud”, nearly all network routers, the world’s 500 fastest supercomputers, and most anything else that is controlled by a computer that is not a desktop. It is the preferred engine for research institutions and small electronic devices.

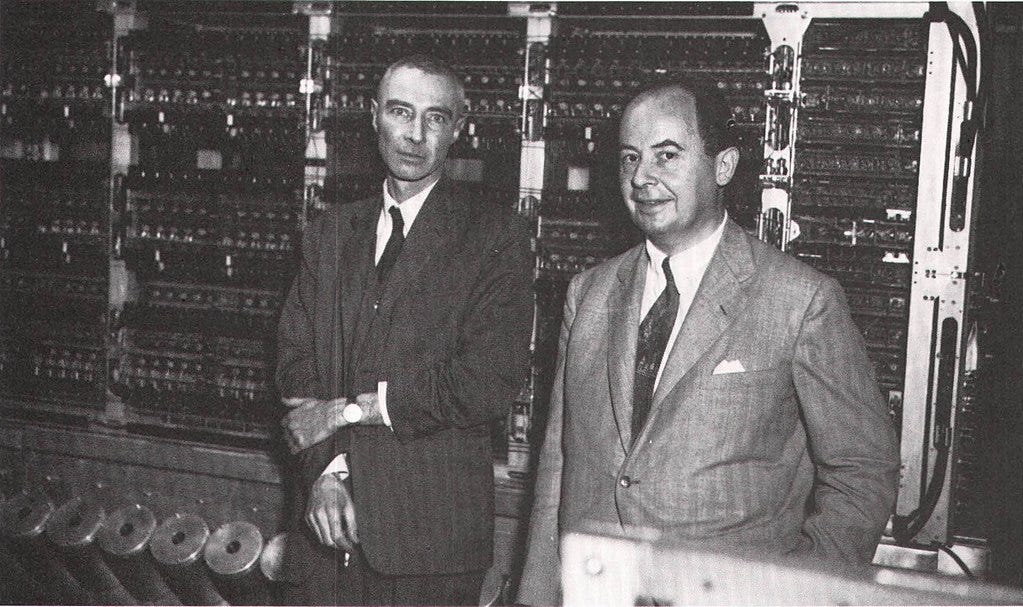

Two individuals get most of the credit for the design of Unix: Dennis Ritchie and Ken Thompson. Ritchie grew up in Summit and went to Summit High, then Harvard, and his father was a researcher at the labs. (I had a friend at Lincoln Elementary School in Summit, Alan Herring, whose father also worked at the Labs, and my dad made fun of Alan’s dad for not always wearing socks that matched. I’m not sure now if this even was true, but what can I say? My dad was a lawyer.) Dennis Ritchie was 11 years older than I am, so we never crossed paths in Summit.

The story that I heard at the Labs about the origin of Unix was that these two guys, Ritchie and Thompson, had an office at the Piscataway NJ Labs facility and wanted to submit remote computing jobs to the Murray Hill facility where the main computing center was. There was no easy way to do this without driving there (at least a half hour each way, lugging heavy boxes of punch cards), so they commandeered an unused computer and took two months off from whatever their “real” job was to write an operating system for it – from scratch – that they liked better than the one it came with. This way they could still use the mainframe without having to drive to it. And they also took the time to write a computer language to write the operating system in. No one would do this today; it’s ridiculous to think about it.

If you Google ‘Unix History’ you get a somewhat different perspective. According to what now pops up, Ritchie and Thompson had been assigned to a US Government project called Multics, with multiple innovative goals to create a modern time-sharing system. It ran on a giant mainframe machine, a GE 645. AT&T was one of the participants in this Department of Defense ARPA project, and decided to pull out because the result was not providing the type of system that the company was looking for, and so the two amigos lost access to their GE 645. The Multics project continued on without Bell Labs staff.

Side note: I had some experience programming the Multics system in my early history as a programmer for a project funded by the Rome Air Development Center, the Air Force’s R&D unit. I thought it was unwieldy at the time (all the variable names were 30-50 characters long, for example).

So, our two researchers, who were now free to work on something else besides Multics and must not have had any actual work assignment, thought that they might write a software system that would be simple but still embody some of the good features of Multics. It became known as Unix, a play on words as a single-user derivative of Multics. They wrote the first version on a DEC PDP-7 “minicomputer” that some other researchers had abandoned, and so was free to them, but modest in computing power.

The Teletype Model 33 keyboard and printer, above right, can be seen in 1960s and 1970s movies, like ‘Three Days of the Condor’ (1975) with Robert Redford, which also featured a PDP-8. Standard PDP-7 memory was 9 KB, which is 1/28 millionth of what my iPhone has. (I bought the smaller model iPhone.) Also, the PDP-7 standard configuration, in current dollars, would cost $700,000, which is more than I paid for my phone. 120 of these PDP-7s were sold, considered to have been “highly successful” by the Digital Equipment Corporation (DEC) at the time.

Side note: I interviewed for a job in 1980 at DEC in Maynard, MA, and did not take it because I thought the people who interviewed me were nuts. I ended up at Bell Labs.

After a bit of refinement, Unix spread like wildfire because AT&T was a regulated telephone monopoly that was not allowed to sell things their employees invented that were not part of the national telephone system. So they basically gave Unix away under licensing that allowed free use, as well as the right to customize it to better meet the needs of whoever was using it. It’s everywhere.

I checked my home Internet routers: the tall white one, Verizon’s, runs “Lighty”; the rectangular one underneath it runs “FreeBSD 14”; and the flat one with 3 antennas runs version 2.6.36.4brcmarm, which are all variants of the original Unix, customized to be embedded in network devices.

Which brings us, finally, to the epochalypse. On a computer running Unix or its derivatives, time is measured by the number of seconds since midnight UTC January 1, 1970. Recall that this was an internal hobby project of two guys who didn’t have enough to do, not ever intended to be an AT&T product, and so no one expected it to still be around more than a few years.
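For the curious, here is a minimal sketch in Python of what “seconds since midnight UTC January 1, 1970” means in practice. The names `EPOCH` and `unix_seconds` are mine, made up for illustration; real systems get this number straight from the operating system.

```python
from datetime import datetime, timezone

# The starting line: this instant is second number 0 on the counter.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def unix_seconds(moment: datetime) -> int:
    """Whole seconds elapsed between the starting line and `moment`."""
    return int((moment - EPOCH).total_seconds())

# One day after the start is 86,400 seconds on the counter.
print(unix_seconds(datetime(1970, 1, 2, tzinfo=timezone.utc)))  # 86400

# The turn of the millennium, as a Unix machine sees it.
print(unix_seconds(datetime(2000, 1, 1, tzinfo=timezone.utc)))  # 946684800
```

Every date and time the computer knows about is just one of these numbers in disguise.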

The original Unix computer did not have much memory, as memory was much more expensive back then. And I’m sure you recall from earlier in this blog that the total amount in the PDP-7 was only about 9,000 8-bit bytes, or 9 KB. Go back and check if you have forgotten; like Chekhov’s gun, I threw in a reference to the PDP-7 for just this reason. Unix represented the counter that kept track of the number of seconds since 1/1/70 as a 32-bit binary number.

I’m coming to the fun part! Binary arithmetic! Digital computers ultimately store everything (even cats playing the piano) as a series of 1s and 0s. This has become hard to believe with AI and everything, but it was true in 1970 and is still true today. A single 1 or 0 is called a ‘bit’, short for ‘binary digit.’ 8 of them are called a ‘byte.’ Don’t ask. There is no word for 9 or 10 of them, or any other number of them until you get to 1,000; then it is called a ‘kilo-’.

In our red-white-and-blue All-American decimal system, each digit can have 10 different values, 0-9, with each position representing 10 times the previous one. We all should have learned this in fourth grade. In binary numbers, each digit can have only 2 values (1 or 0), and each position represents 2 times the previous one. So here is a quiz – no cheating! Match the binary number with its American value. I’m giving you one really easy one to build your confidence:

| Binary number | Equivalent All-American / Decimal number |
| --- | --- |
| 000 | 0 |
| 010 | 4 |
| 100 | 3 |
| 011 | 2 |

Did you get them all right? Hooray! Or did you cheat, and just skip the quiz? It was the honor system. I don’t want to know if you cheated.
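Spoiler alert: if you want to check your answers, here is a small Python sketch that grades the quiz for you. The function name `binary_to_decimal` is my own invention; it just walks the digits left to right, doubling as it goes, which is the “each position represents 2 times the previous one” rule in action.

```python
def binary_to_decimal(bits: str) -> int:
    """Read a string of 1s and 0s, doubling the running total at each digit."""
    value = 0
    for digit in bits:
        value = value * 2 + int(digit)
    return value

for bits in ["000", "010", "100", "011"]:
    print(bits, "=", binary_to_decimal(bits))
# 000 = 0
# 010 = 2
# 100 = 4
# 011 = 3
```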

It turns out that you can add binary numbers just like you learned to add American/decimal ones in the fourth grade. Two 1’s is a zero, with a one carried over. It is that simple. (Just like in American, two 5’s is a zero, with the 1 carried over. 05 + 05 = 10.) For example, in binary:

001 + 001 = 010;

010 + 001 = 011; and

100 + 100 = 1000.
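If you don’t trust my arithmetic, here is a rough Python sketch of the fourth-grade method, working column by column from the right and carrying the ones. The function `add_binary` is a hypothetical helper I wrote for this post, not how a real computer does it (real ones use wires).

```python
def add_binary(a: str, b: str) -> str:
    """Add two binary strings column by column, right to left, carrying ones."""
    width = max(len(a), len(b))
    a, b = a.zfill(width), b.zfill(width)  # pad the shorter one with zeros
    carry = 0
    digits = []
    for x, y in zip(reversed(a), reversed(b)):
        total = int(x) + int(y) + carry
        digits.append(str(total % 2))  # the digit that stays in this column
        carry = total // 2             # 1 if this column spilled over
    if carry:
        digits.append("1")             # a final carry makes the number longer
    return "".join(reversed(digits))

print(add_binary("001", "001"))  # 010
print(add_binary("010", "001"))  # 011
print(add_binary("100", "100"))  # 1000
```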

OK, now it gets tricky: how do you represent a negative number?

In 1945 John von Neumann proposed using the 2’s complement method that neatly solves this problem. To get the negative equivalent of a number, you flip the bits (1 becomes 0 and vice-versa), then add 1, ignoring any overflow. If the highest bit is 1, then the number is negative. You can then add them to each other, and you don’t have to think about whether the result is positive or negative; it happens automatically. Whoa. When I first heard this, it blew my mind. I think that says something about me, which I am hoping is a good thing.

Examples: using 4 bits max, 0001 is just 1, same as before. All positive numbers stand for themselves. Flip the bits, yielding 1110, then add 1, and you have 1111 for -1. Note that if you add 1111 and 0001 together, you get zero, which would be correct; -1 and +1 added together are zero. (Carry the ones all the way out to the left and ignore the overflow.)
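Here is the same 4-bit example as a small Python sketch. Python’s own integers never overflow, so I am faking a 4-bit machine with a mask; `BITS`, `MASK`, `negate`, and `as_signed` are names I made up for the illustration.

```python
BITS = 4
MASK = (1 << BITS) - 1  # 0b1111: keeps only the lowest 4 bits

def negate(value: int) -> int:
    """Flip the bits, add 1, and throw away any overflow."""
    return ((value ^ MASK) + 1) & MASK

def as_signed(pattern: int) -> int:
    """Read a 4-bit pattern as 2's complement: highest bit set means negative."""
    if pattern & (1 << (BITS - 1)):
        return pattern - (1 << BITS)
    return pattern

minus_one = negate(0b0001)
print(format(minus_one, "04b"))                    # 1111
print(as_signed(minus_one))                        # -1
print(format((minus_one + 0b0001) & MASK, "04b"))  # 0000
```

The last line is the magic trick: plain old addition, with the overflow thrown away, gives zero without anyone ever checking a sign.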

If you are still with me, which I deem unlikely, the next part of the Epochalypse story is to learn that Ritchie and Thompson, our fearless Unix inventors, chose to represent the number counting the seconds from 1/1/70 as a 32-bit 2’s complement binary number. This would have been the default in the computer language they were using (which they decided to invent as part of the project), and had the nice property that you could represent dates back to 1901 as well as dates moving forward.

Let’s all do the math on this: what is the largest positive number that can be represented by 32 bits when using 2’s complement format? I know you are all way ahead of me, but it is 2³¹ - 1, or 2x2x2… 31 times, minus 1. (One of the 32 bits is used up by the sign.) This turns out to be 2,147,483,647. The starting point, midnight UTC on January 1, 1970, is called the “Unix Epoch”, and a 32-bit counter covers the dates within about this number of seconds forwards and backwards from it.
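To save you from multiplying 2 by itself 31 times, here is the arithmetic as a short Python sketch. The variable names are mine, and the 365.25-day year is a rough assumption that is good enough for a blog.

```python
BITS = 32

largest = 2 ** (BITS - 1) - 1   # top bit is the sign, leaving 31 bits
smallest = -(2 ** (BITS - 1))   # 2's complement has one extra negative value

print(f"{largest:,}")           # 2,147,483,647
print(f"{smallest:,}")          # -2,147,483,648
print(format(largest, "032b"))  # a 0 followed by thirty-one 1s

# Roughly how many years that many seconds covers in each direction.
SECONDS_PER_YEAR = 365.25 * 86_400
years_each_way = largest / SECONDS_PER_YEAR
print(f"about {years_each_way:.0f} years")  # about 68 years
```

Sixty-eight years back from 1970 lands in 1901, and sixty-eight years forward lands in 2038.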

FYI, a Unix day is always exactly 86,400 seconds, with no leap seconds. Out in the real world, a leap second gets added every few years. Because the rotational speed of the earth varies (I swear I am not making this up), leap seconds do not occur every year; there have been 27 added since 1972. I am hoping that the leap second thing isn’t going to be a problem for you, so let’s ignore it for the rest of this discussion.

At this point we have established that exactly 2,147,483,647 seconds after midnight UTC January 1, 1970, the 32-bit time counter on any one of these Unix-derivative computers will hit its maximum. One second later, it will overflow and go negative.

The counter maxes out on January 19, 2038 at 03:14:07 UTC.
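You don’t have to take my word for the date. Here is a Python sketch that imitates a signed 32-bit counter and shows where it lands after one tick too many. The helpers `wrap_int32` and `to_date` are hypothetical names of my own; a real 32-bit machine does the wrapping in hardware without being asked.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1

def wrap_int32(n: int) -> int:
    """Imitate a signed 32-bit register: keep 32 bits, top bit means negative."""
    n &= 0xFFFFFFFF
    return n - 2**32 if n >= 2**31 else n

def to_date(seconds: int) -> datetime:
    """Turn a count of seconds since 1/1/70 back into a calendar date (UTC)."""
    return EPOCH + timedelta(seconds=seconds)

after_tick = wrap_int32(INT32_MAX + 1)  # one second past the maximum

print(to_date(INT32_MAX))   # 2038-01-19 03:14:07+00:00
print(after_tick)           # -2147483648
print(to_date(after_tick))  # 1901-12-13 20:45:52+00:00
```

So one second after 03:14:07 on that Tuesday morning, an unpatched 32-bit clock believes it is a Friday evening in December 1901.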

Oops! Any programs or computers that rely on comparing time references to make calculations might crash. (If the computer is part of a car, then the word ‘crash’ will take on new meaning.)

Recognizing this problem, many of these programs and systems have already been updated to use 64-bit numbers instead of 32-bit. A 64-bit counter will not roll over for about 292 billion years, which is roughly 20 times the current age of the Universe. That should be enough, but feel free to do the math yourself. Since almost all desktop computers run Windows and not Unix, no worries about your personal machine. Places like Google, banks, airlines, and other big data centers are hip to this and have already fixed the problem by upgrading to 64-bit time. In fact, probably all the computers that really matter will be fixed by then.
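If you would rather not do the math yourself, here is a back-of-the-envelope Python sketch. The 13.8 billion year figure for the age of the Universe is the commonly quoted estimate, plugged in here as an assumption, and the 365.25-day year is the same rough shortcut as before.

```python
SECONDS_PER_YEAR = 365.25 * 86_400
AGE_OF_UNIVERSE_YEARS = 13.8e9  # assumption: commonly quoted estimate

int64_max = 2**63 - 1  # largest value of a signed 64-bit counter
years_until_rollover = int64_max / SECONDS_PER_YEAR
universe_ages = years_until_rollover / AGE_OF_UNIVERSE_YEARS

print(f"{years_until_rollover / 1e9:.0f} billion years")    # 292 billion years
print(f"{universe_ages:.0f} times the age of the Universe")  # 21 times the age of the Universe
```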

So I am not really worried about it. Much.